Today I’m going to show you how to write a PIVOT query when column names are dynamic. A dynamic pivot is very necessary when you don’t know the column names or column names can change with the time or due to any other reason. I’m not going to discuss the pros and cons of the dynamic sql or even the Pivot itself (in my opinion pivoting of data should be handled at the reporting level.). So let see how to write a dynamic pivot query with a very simplified example.

First lets create some sample data:

--------------------------------------------------------------------------------------------------------

-- create table

CREATE TABLE Orders

(

OrderID INT,

ProductName VARCHAR(50),

Quantity INT,

OrderDate DATE

);

-- insert some sample data

INSERT INTO Orders

VALUES

(1, 'Pen', 100, GETDATE()-1),

(2, 'Pen', 200, GETDATE()-2),

(3, 'Pen', 300, GETDATE()-3),

(4, 'Pencil', 500, GETDATE()-1),

(5, 'Pencil', 600, GETDATE()-2),

(6, 'Pencil', 400, GETDATE()-3),

(7, 'Eraser', 30, GETDATE()-1),

(8, 'Eraser', 20, GETDATE()-2),

(9, 'Eraser', 10, GETDATE()-3),

(10, 'Pen', 100, GETDATE()-4),

(11, 'Pencil', 500, GETDATE()-4),

(12, 'Eraser', 30, GETDATE()-4);

-- verify the data

SELECT *

FROM Orders

--------------------------------------------------------------------------------------------------------

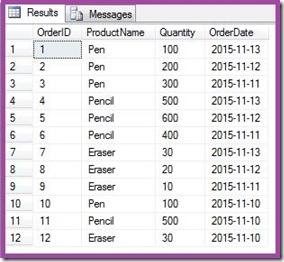

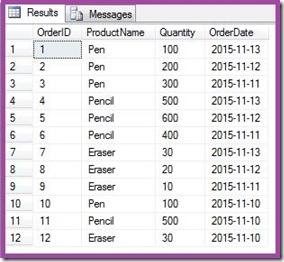

This is how our data looks like:

Requirement: Now I want to Pivot this data on the column OrderDate. I want to show the last 3 dates of OrderDates in the column and respective quantity of each Product under the Date. Here is the expected output, though you need to remember that you will get different column names(dates) as my sample data itself is dynamic:

Now first understand the challenges. Our requirement is to show the last 3 days of OrderDate. Today I’m writing this post that’s why last days are 11 Nov – 13 Nov, but tomorrow I’ll want my query to show the dates 12 Nov – 14 Nov. It will continue to change. Every time changing these dates in our query can be difficult/painful/irritating. And that’s where dynamic SQL comes into the picture. The main challenge is to get these ever changing column names and pass them into the PIVOT block without hard-coding and also into the SELECT clause.

Lets do one thing at a time. First we will try to get the last 3 OrderDates and concatenate them in desired order and format. For that I’m going to use XML PATH. Note that, there could be other methods to concatenate the column names into a single variable. Here is my code to get the column names dynamically into a variable:

--------------------------------------------------------------------------------------------------------

DECLARE @ColumnNames VARCHAR(1000)

SELECT @ColumnNames =

STUFF(( SELECT DISTINCT TOP 100

', ' + QUOTENAME(CONVERT(VARCHAR(10), OrderDate, 120))

FROM Orders

WHERE OrderDate > DATEADD(DD, -4, GETDATE())

ORDER BY ', ' + QUOTENAME(CONVERT(VARCHAR(10), OrderDate, 120)) DESC

FOR XML PATH('')

), 1, 2, '')

-- Just to check how we are getting the column names

PRINT @ColumnNames

--------------------------------------------------------------------------------------------------------

Few notes on above query:

1. DISTINCT is needed because one OrderDate is appearing multiple times in the table.

2. TOP 100 is needed because I want to order the dates in the descending order. Since our query is actually a sub-query, TOP hack is needed. I put the number 100 just randomly, I could have simply used TOP 3 because I want only top 3 dates. When you are not sure about the number of columns you can either put a relatively large number like 100 or TOP 100 PERCENT.

3. In WHERE clause I'm restricting the dates to last 3 dates from getdate() i.e. from "TODAY".

4. I'm also converting OrderDate to varchar and using the style 120 to get the dates in the desired format. In my case I want the dates in yyyy-mm-dd, that's why the style 120.

This is how we get column names from above query: [2015-11-13], [2015-11-12], [2015-11-11]

Now here is our PIVOT query by using dynamic column names created above:

--------------------------------------------------------------------------------------------------------

DECLARE @ColumnNames VARCHAR(1000)

DECLARE @Query VARCHAR(4000)

-- This part is already explained above

SELECT @ColumnNames =

STUFF(( SELECT DISTINCT TOP 100

', ' + QUOTENAME(CONVERT(VARCHAR(10), OrderDate, 120))

FROM Orders

WHERE OrderDate > DATEADD(DD, -4, GETDATE())

ORDER BY ', ' + QUOTENAME(CONVERT(VARCHAR(10), OrderDate, 120)) DESC

FOR XML PATH('')

), 1, 2, '')

-- Preparing our PVIOT query

SET @Query = 'SELECT ProductName, ' +

@ColumnNames + '

FROM

( SELECT ProductName

,Quantity

,OrderDate

FROM Orders

) AS M

PIVOT

(

SUM( Quantity )

FOR OrderDate IN ( ' + @ColumnNames + ' )

) AS P

'

--Executing @Query to get the result

EXECUTE(@Query)

--------------------------------------------------------------------------------------------------------

You may also like to see my other post on Pivot: PIVOT Multiple Columns in SQL Server

Mangal Pardeshi