Everybody has different views about VIEWS, that’s what make them interesting topic to discuss. Other thing is if you make some assumptions about VIEWS, they can lead you to problems. As my target audience is SQL beginners, today I’ll talk about few things about VIEWS so some of the obvious mistakes can be avoided. Actually there are so many things we can talk about VIEWS, but I leave them for future posts.

Today I’ll show what happens when you create a view using “SELECT * FROM TableName” and then ALTER the underlying table used in the VIEW. The normal assumption most of the SQL beginners make is: If you create a VIEW using “SELECT * FROM TableName”, all the changes made in the underlying table will automatically reflect in the VIEW as well.

Case 1: You add a column to the table.

Now here is a question for you: If you have a table named Employees and a VIEW created top of it with simple query “SELECT * FROM Employees”. Now if I add one column to Employees table, will that column appear in result if I execute query “SELECT * FROM View”?

I had ask this question many times in interviews; 80% of time I heard a thumping “Yes”. And most of the candidate were having experience of well over 3 years. Answer to above question is BIG NO. Let me explain this with actual example, 1st lets create some sample data.

------------------------------------------------------------------------------------------------------

-- Create the table with 4 columns

CREATE TABLE Employees

(

EmpID INT,

FirstName VARCHAR(6),

LastName VARCHAR(20),

ManagerID INT

)

GO

-- Populate the table with sample data

INSERT INTO Employees SELECT

1, 'Mangal', 'Pardeshi', 0 UNION ALL SELECT

2, 'Rahul', 'Sharma', 1 UNION ALL SELECT

3, 'Ajay', 'Varma',2

GO

-- Create a View with wild card *

CREATE VIEW Emp AS

SELECT *

FROM Employees

GO

-- Verify the data in the table and view

SELECT *

FROM Employees

GO

SELECT *

FROM Emp

GO

------------------------------------------------------------------------------------------------------

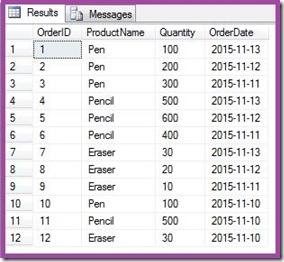

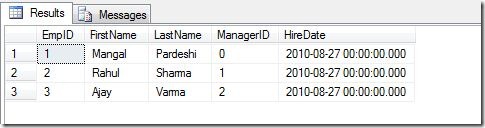

Data in the table and view:

As you can see the data in the Table and View is identical, so are the number of columns. Now lets add one column HireDate in the table and see what happens.

------------------------------------------------------------------------------------------------------

-- Add HireDate Column in Employees Table

ALTER TABLE Employees ADD HireDate DATETIME

GO

-- Update the HireDate column with some date

UPDATE Employees

SET HireDate = '20100827'

GO

-- Again Verify the data in table and View

SELECT *

FROM Employees

GO

SELECT *

FROM Emp

GO

------------------------------------------------------------------------------------------------------

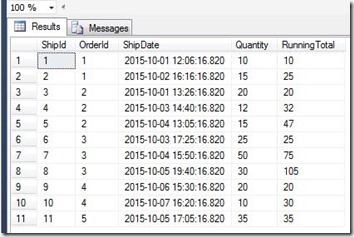

As you see HireDate column didn’t appear in VIEW. So question arises: Why a VIEW doesn't get refresh when I add a column to the table?

Short answer is when you create the VIEW, the column information/definition of VIEW(metadata of VIEW) gets stored in system tables at the time of creation of VIEW. And that metadata doesn’t get refresh when you alter the underlying table. You have to explicitly refresh the metadata of VIEW.

So next question is: How to refresh the VIEW once you modified the underlying table?

There are 2 ways to refresh the VIEW:

1. Using the system stored procedure sp_refreshview

From Books Online - sp_refreshview: Updates the metadata for the specified non-schema-bound view. Persistent metadata for a view can become outdated because of changes to the underlying objects upon which the view depends.

Syntax: EXECUTE sp_refreshview ‘viewname’

2. Or by executing ALTER VIEW statement. When you ALTER the VIEW, SQL Server will pick the latest column definition from underlying table and will update the VIEW metadata.

So in our case we can refresh the VIEW Emp by:

------------------------------------------------------------------------------------------------------

-- 1. using system stored procedure sp_refreshview

EXECUTE sp_refreshview 'Emp'

GO

-- 2. Or by executing the ALTER statement

ALTER VIEW Emp

AS

SELECT *

FROM Employees

GO

-- Verify the data in View

SELECT *

FROM Emp

GO

------------------------------------------------------------------------------------------------------

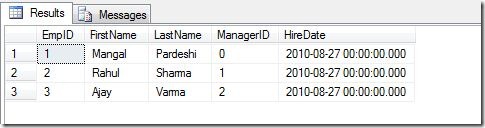

Note you don’t need to execute both the queries, either of the above can do the trick for you. Now you can see the HireDate column in Emp view as well:

Case 2: You drop a column from the table.

Similarly when you drop a column from the table, the VIEW definition doesn’t get updated even though you have used wild card “*” in VIEW definition. Now lets drop the column HireDate from the table and see what happens:

Note: In previous step I refreshed the View after adding the HireDate, so now HireDate is part of Emp view as well.

------------------------------------------------------------------------------------------------------

-- First Verify the data before dropping column

SELECT *

FROM Employees

GO

SELECT *

FROM Emp

-- Drop HireDate column from Table

ALTER TABLE Employees DROP COLUMN Hiredate

GO

-- Verify the data after dropping the column.

SELECT *

FROM Employees

GO

SELECT *

FROM Emp

GO

------------------------------------------------------------------------------------------------------

Now in this case HireDate column will get removed from table but the metadata of the VIEW still have its information stored so you will get the following error on selecting data from VIEW.

Msg 4502, Level 16, State 1, Line 1

View or function 'Emp' has more column names specified than columns defined.

The solution is, again refresh the VIEW definition by executing:

------------------------------------------------------------------------------------------------------

EXECUTE sp_refreshview 'Emp'

GO

------------------------------------------------------------------------------------------------------

Now you won’t get the same error.

Case 3: You drop one or more columns and add equal or more number columns to the table.

This case is even more dangerous as User selecting data from the VIEW can get wrong data under wrong columns and can create confusion. I won’t explain this in detail, just execute following queries and you will realize what I’m saying.

------------------------------------------------------------------------------------------------------

-- Drop column LastName from table

ALTER TABLE Employees DROP COLUMN LastName

GO

-- Add Column DeptName with default value 'IT'

ALTER TABLE Employees ADD DeptName VARCHAR(10) NOT NULL DEFAULT 'IT'

GO

-- Verify the data

SELECT *

FROM Employees

GO

SELECT *

FROM Emp

GO

------------------------------------------------------------------------------------------------------

Now if you see, the LastName column is not present in the table and you can also see the DeptName column in the table. And interesting observation with VIEW is, though the data is exactly matching with the table but columns names are not correct. We again need to refresh the VIEW to correct it.

------------------------------------------------------------------------------------------------------

EXECUTE sp_refreshview 'Emp'

GO

-- Verify the data

SELECT *

FROM Emp

GO

------------------------------------------------------------------------------------------------------

Back to normal now:

What is the solution?

Obvious prevention is don’t use wild card “*” while creating VIEWS. But even listing out columns is just a prevention or I’d say it is a good practice. Because even after listing out the columns, if you drop a column from the table that has been used in any VIEW you will still face problems.

The solution is creating the view using “WITH SCHEMABINDING” option.

From Books Online: Binds the view to the schema of the underlying table or tables. When SCHEMABINDING is specified, the base table or tables cannot be modified in a way that would affect the view definition. The view definition itself must first be modified or dropped to remove dependencies on the table that is to be modified. When you use SCHEMABINDING, the select_statement must include the two-part names (schema.object) of tables, views, or user-defined functions that are referenced. All referenced objects must be in the same database.

Views or tables that participate in a view created with the SCHEMABINDING clause cannot be dropped unless that view is dropped or changed so that it no longer has schema binding. Otherwise, the Database Engine raises an error. Also, executing ALTER TABLE statements on tables that participate in views that have schema binding fail when these statements affect the view definition.

Let me show you one example with SCHEMABINDING:

------------------------------------------------------------------------------------------------------

-- Create View with SCHEMABINDING

CREATE VIEW NewEmp

WITH SCHEMABINDING

AS

SELECT EmpID,

FirstName

FROM dbo.Employees

GO

-- Verify the data

SELECT *

FROM NewEmp

GO

-- Now try to alter the table

ALTER TABLE Employees DROP COLUMN EmpID

GO

------------------------------------------------------------------------------------------------------

Now you will get the error:

Msg 5074, Level 16, State 1, Line 2

The object 'NewEmp' is dependent on column 'EmpID'.

Msg 4922, Level 16, State 9, Line 2

ALTER TABLE DROP COLUMN EmpID failed because one or more objects access this column.

Basically WITH SCHEMABINDING has prevented the change that would affect the view definition.

Additional Information:

A] If you want see the all the dependant objects on particular table you can use following script:

------------------------------------------------------------------------------------------------------

SELECT *

FROM sys.objects AS so

INNER JOIN sys.sql_expression_dependencies AS sed

ON so.object_id = sed.referencing_id

WHERE so.type = 'V' AND sed.referenced_id = OBJECT_ID('dbo.employees') GO

------------------------------------------------------------------------------------------------------

B] The following example creates a script that refreshes the metadata for all views that have a dependency on table dbo.employees:

------------------------------------------------------------------------------------------------------

SELECT DISTINCT 'EXEC sp_refreshview ''' + name + ''''

FROM sys.objects AS so

INNER JOIN sys.sql_expression_dependencies AS sed

ON so.object_id = sed.referencing_id

WHERE so.type = 'V' AND sed.referenced_id = OBJECT_ID('dbo.employees')

GO

------------------------------------------------------------------------------------------------------

Source:

CREATE VIEW (Transact-SQL)

sp_refreshview (Transact-SQL)

Namaste!

- Mangal